A few weeks ago, I found myself staring at four Claude Code terminal windows running simultaneously on my screen, each one showing a different AI agent working on a different project.

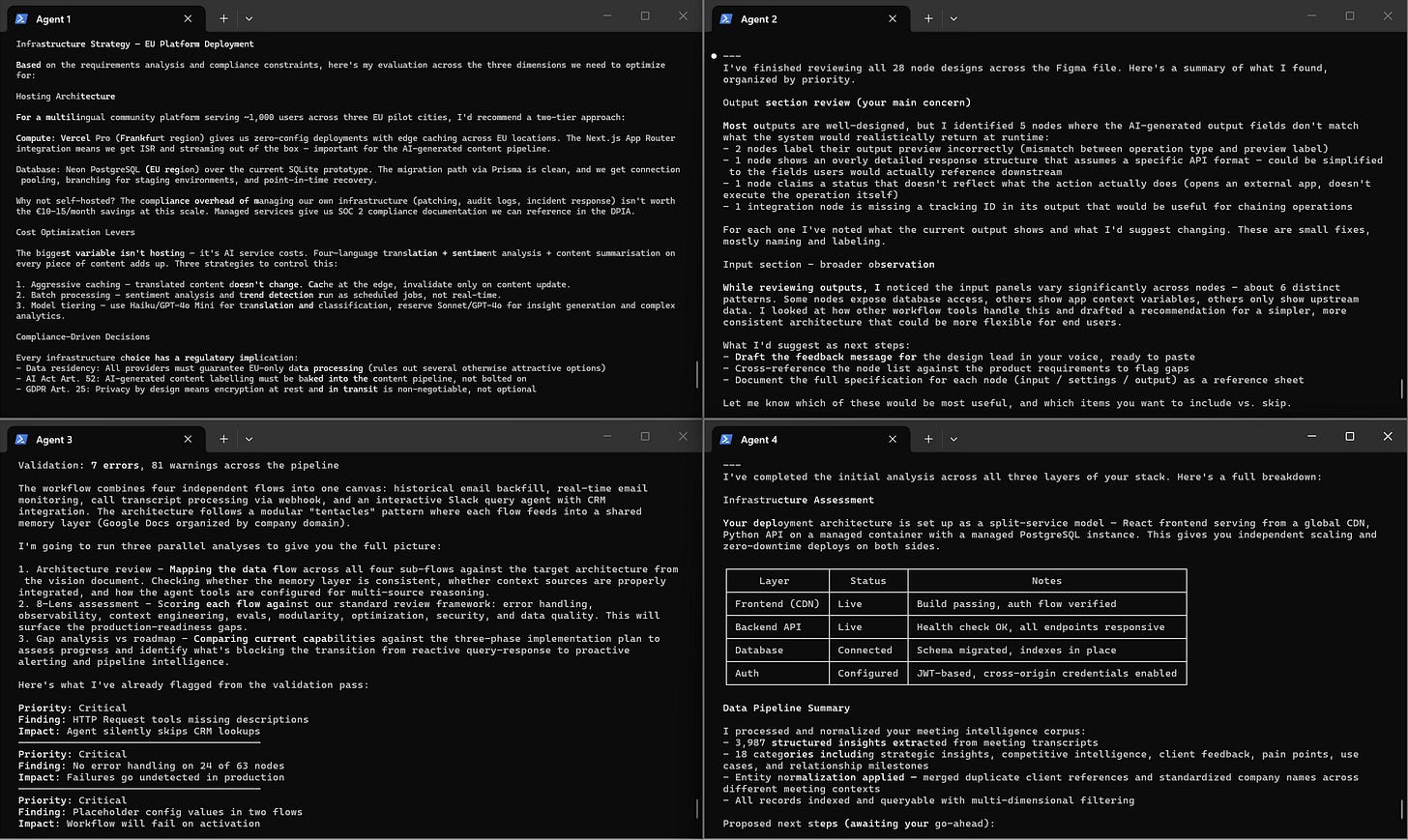

One was evaluating infrastructure options for a platform deployment, another was reviewing design specifications across a set of UI components, a third was auditing a complex automation workflow and flagging errors, and the fourth was assessing a full-stack application and mapping out its data pipeline.

I wasn’t writing code or answering prompts. I was checking in on progress the way you’d check in on a team of consultants you’d briefed that morning.

That moment stuck with me, not because the technology was new (I’d been using Claude Code for months at this point) but because I recognized the feeling.

It was the same feeling I’ve had as a consultant, managing small teams on client engagements: the mix of trust and vigilance that comes with handing off meaningful work to someone capable, knowing you’ll need to review the output but also knowing you don’t need to hover over every step.

Something had changed in how I was working with AI, and I think it’s worth talking about because I believe it has implications well beyond software development.

How we got here

This shift didn’t happen overnight. It was a gradual progression that only became obvious in hindsight.

Just a year ago, I was having conversations with AI the way most people still do: asking questions, getting answers, refining my prompts, copy-pasting the useful bits. The chatbot had picked up extra abilities such as searching the web, but the interaction model was essentially the same: you ask, it answers.

Then the relationship started evolving. I began working alongside AI in real time on technical projects, treating it more like a pair of hands that could keep up with my thinking. That was already a different dynamic, more collaborative, more like working with a capable colleague than querying a database.

The next step was AI contributing to meaningful automation work. It helped us build client workflows that handle entire processes, like screening incoming job applications, evaluating candidates against specific criteria, and sending personalized responses, without anyone touching them once they’re deployed.

And now, most recently, I’ve been running multiple AI agents in parallel across various projects, reviewing their work the way I’d review deliverables from a team.

The screenshot above is from a real working session: four agents, four projects, all progressing simultaneously while I focus on the parts that require human judgment.

I’m not the only one who’s noticed this shift away from plain chatbot conversation. Peter Steinberger, the creator of OpenClaw (the viral AI agent we wrote about two weeks ago), described a similar evolution on the Lex Fridman podcast recently (buckle up, it’s more than three hours long…).

He now delegates most of his coding work by voice, talking to his agent rather than typing prompts. He trusts his agent with the routine work, but anything sensitive gets his full attention.

The industry is moving in the same direction. Within a few weeks of each other this year, Anthropic released Cowork and OpenAI released Frontier.

Cowork is available inside Anthropic’s Claude Desktop app as a dedicated section, separate from chat: you describe what you want done, and Claude plans and executes multi-step tasks independently while you monitor progress. The name is evocative and Anthropic even describes it as a shift “from assistant to full collaborator”. Frontier takes a similar idea to target the enterprise: OpenAI calls it an “operating system” where AI agents and human employees share the same data, tools, and access controls, and where agents can run software, execute workflows, and make decisions autonomously.

Both products’ user interfaces were designed for delegation, and the fact that the two leading AI companies converged on this model independently tells us something about where this is all heading.

Why this is a management skill, not a technical one

Ethan Mollick, a professor at Wharton who has been studying how people actually use AI in their work, recently published a blog post in which he talks about “Delegation as the new prompting”.

In it, he lays out the documentation you need for effective AI delegation: define what you’re trying to accomplish and why, set the limits of authority, specify what “done” looks like, request interim outputs so you can course-correct, and establish what should be verified before you accept the final result.

Reading that list, I realized it’s not a prompting guide. It’s a management checklist, word for word how you’d brief a team member or a colleague before handing them a project.
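To make that checklist concrete, here is a minimal sketch of how such a brief could be captured as a small data structure. The class name `DelegationBrief`, its field names, and the rendered layout are our own illustrative assumptions, not something taken from Mollick’s post or from any particular tool; the example content borrows the job-application workflow mentioned earlier.

```python
from dataclasses import dataclass, field


@dataclass
class DelegationBrief:
    """Hypothetical briefing structure; field names are illustrative only."""

    goal: str                    # what you are trying to accomplish
    why: str                     # why it matters (the context)
    authority_limits: list[str]  # what the agent may not do on its own
    definition_of_done: str      # what "done" looks like
    interim_outputs: list[str] = field(default_factory=list)  # checkpoints
    verification: list[str] = field(default_factory=list)     # checks before accepting

    def missing_sections(self) -> list[str]:
        """Return the names of sections that were left empty."""
        missing = []
        for name, value in vars(self).items():
            if isinstance(value, str):
                empty = not value.strip()
            else:
                empty = len(value) == 0
            if empty:
                missing.append(name)
        return missing

    def render(self) -> str:
        """Turn the brief into plain text that a person or an agent can read."""

        def bullets(items: list[str]) -> str:
            return "\n".join(f"- {item}" for item in items) or "- (none specified)"

        return "\n".join([
            f"Goal: {self.goal}",
            f"Why: {self.why}",
            "Limits of authority:",
            bullets(self.authority_limits),
            f"Done means: {self.definition_of_done}",
            "Interim outputs:",
            bullets(self.interim_outputs),
            "Verify before accepting:",
            bullets(self.verification),
        ])


# Illustrative content only.
example = DelegationBrief(
    goal="Screen incoming job applications against the role criteria",
    why="Recruiters should spend their time on the strongest candidates",
    authority_limits=["Do not send any response without human approval"],
    definition_of_done="Every application has a score and a short rationale",
    interim_outputs=["Scores for the first ten applications"],
)
print(example.render())
print("Still missing:", example.missing_sections())
```

The `missing_sections` check is the useful part of the sketch: if a section is empty, the brief is probably not ready to hand over, whether the recipient is an agent or a colleague.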

According to estimates Mollick cites from OpenAI’s research, this delegation approach can yield results roughly 40% faster and 60% cheaper than executing the work yourself, which tracks with what we’ve been seeing in practice.

Once you see it this way, it becomes easier to understand why so many people still struggle to get real value from AI tools that are now objectively very capable.

The tools are there. What’s missing is the practice of delegating effectively, something that many knowledge workers might simply never have had to develop before.

Delegation requires you to think clearly about what you actually want before you ask for it, to set boundaries without micromanaging, and to review output critically without redoing the work yourself.

This is exactly what Andrej Karpathy (the former head of AI at Tesla and co-founder of OpenAI) recently coined a term for: “agentic engineering”.

As he put it, “the new default is that you are not writing the code directly 99% of the time, you are orchestrating agents who do and acting as oversight.” He chose the word “engineering” deliberately, to emphasize that there is an art and science and expertise to it, that it’s something you can learn and become better at.

Karpathy is talking about software development here, but the principle also applies beyond code. Any knowledge work that can be broken down into a goal, a context, and a set of constraints can be delegated this way.

What matters now is whether you can explain what you need clearly enough for AI to do it well, and it’s a new skill people will need to learn.

I’ve learned this by practicing. Good delegation, I’ve found, is about providing a clear goal, the right context, and broad guidelines (instead of rigid step-by-step instructions that end up biasing or limiting the output). The less you try to control the How, the better the output tends to be. But you need to define the What (goal) and the Why (context).

What we’re doing about it

At ElevAI, we’ve started reorganizing how we work based on this realization.

The first thing we changed was our documentation. Every project now has a context file, essentially a briefing document you’d hand to a consultant joining a project mid-way through: the background of the project, the constraints we’re working within, our conventions, examples of what good output looks like.

These documents are written for two audiences simultaneously: the human team members who might pick up the project, and the AI agents who might be working on it.

We’ve found that this dual-audience discipline actually improves the quality of our documentation overall, because vagueness that a human colleague might tolerate through context and intuition will produce genuinely poor results when an AI agent tries to act on it.
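One way to enforce that discipline is a small completeness check that runs before a context file is handed to anyone. The sketch below is only an assumption about how this might look: it supposes the file uses `## ` headings, and the section names simply mirror the four elements listed above (background, constraints, conventions, examples of good output). It is not a description of any specific tool’s format.

```python
# Section names are an assumed convention for this sketch.
REQUIRED_SECTIONS = (
    "Background",
    "Constraints",
    "Conventions",
    "Examples of good output",
)


def find_missing_sections(text: str, required=REQUIRED_SECTIONS) -> list[str]:
    """Return required sections that are absent or have no body text."""
    bodies: dict[str, list[str]] = {}
    current = None
    for line in text.splitlines():
        stripped = line.strip()
        if stripped.startswith("## "):
            # A new section starts; track it case-insensitively.
            current = stripped[3:].strip().lower()
            bodies[current] = []
        elif current is not None and stripped:
            # Any non-blank line counts as body text for the open section.
            bodies[current].append(stripped)
    return [name for name in required if not bodies.get(name.lower())]


# Illustrative context file with one empty and one absent section.
sample = """\
# Project context

## Background
Client wants to automate candidate screening.

## Constraints
No candidate data leaves the client's environment.

## Conventions
"""

print(find_missing_sections(sample))
```

A heading with nothing under it is reported the same way as a missing heading, since an empty section gives an agent as little to act on as no section at all.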

We’ve also started encoding our recurring processes into what we call Skills: reusable sets of instructions that capture how we approach specific types of work, whether it’s writing proposals, reviewing automation workflows, or preparing for client meetings.

The interesting side effect is that the exercise of writing these Skills has forced us to be much more explicit about our own methods than we ever were before. If you can’t write down what “good” looks like clearly enough for an agent to follow the instructions, you probably couldn’t delegate that task effectively to a human either, and acknowledging that has been a useful kind of humility.

We’re also thinking about how to help our clients experiment with this delegation mode for themselves. Tools like Claude Code or OpenClaw show that it is becoming a reality, but most organizations aren’t equipped to test those tools safely. This is why we’re exploring ways to offer sandboxed environments where our clients can test these tools securely, with the right guardrails and access controls in place, and start practicing their own delegation workflows on real tasks.

Where this leads

We’ve been working in AI long enough to recognize when a fundamental shift happens in the way people relate to the technology, and the move from conversation to delegation feels like one of those moments.

Our conviction is that the professionals and companies who learn to delegate to AI with the same rigor and discipline they’d bring to managing a talented team member will see their advantage compound. The leverage this provides is real, it’s already visible in our own work, and we believe it’s only going to grow as the tools improve.
