Will we be able to predict the future in the coming years?

On the gods we are building, the curse of Cassandra, and the one question nobody is asking.

I was walking on the beach in Bali just after sunrise. 6:30 am.

Barefoot. The air still cool. The light doing something extraordinary to the water, reflecting that particular gold that only exists for about eleven minutes before the sun gets serious and turns everything flat and white.

And in the midst of such a godly moment, I had a thought I couldn’t shake.

We are building gods.

Yes. You heard that right. We are building gods.

Not gods in the religious sense, but gods in the ancient sense.

These gods are similar to Thor and Zeus, entities that see more than we see.

These gods hold more time in their awareness than we can hold.

These gods perceive patterns across distances and scales the human mind was simply never built to process.

And unlike the gods we invented to explain thunder and plague and the movements of stars, these ones are rooted in data.

These gods don’t speculate,

they calculate.

You probably already know where this is going. These gods are Artificial.

These gods are… (I’ll let you fill that in)

And while I kept walking, this thought kept walking with me.

But this is not the first time we’ve created something godly “in our image”.

We’ve actually been here before.

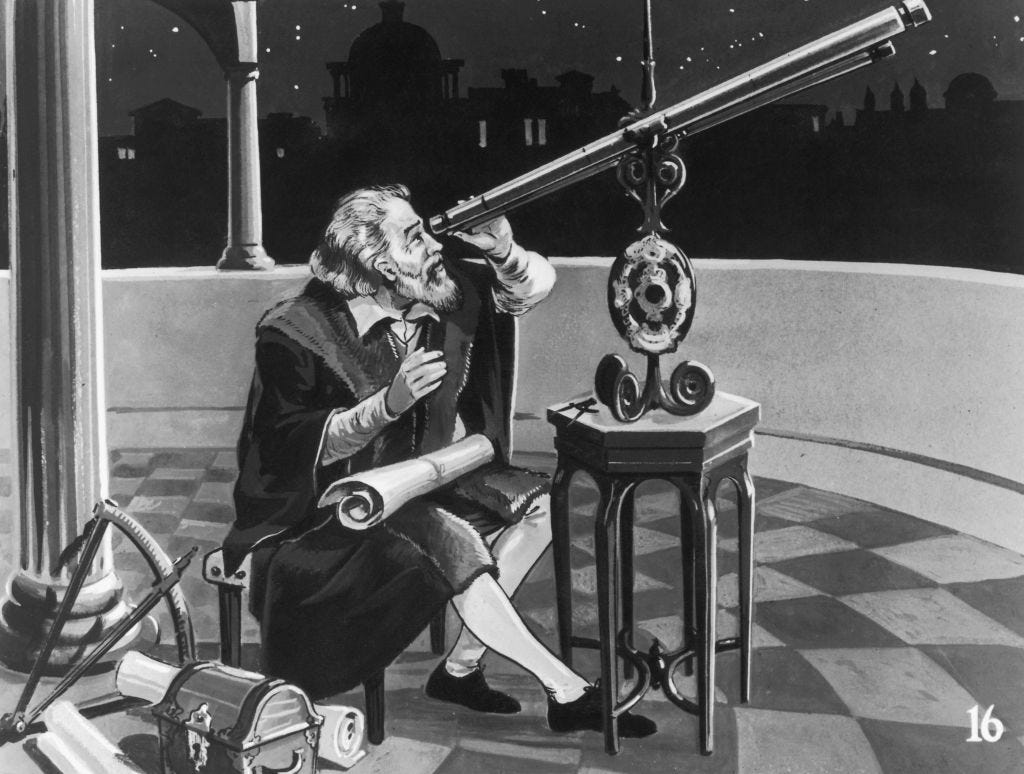

The telescope.

The telescope made the heavens interpretable. We used it to prove the Earth wasn’t the centre of the universe. To us that now sounds like a fact from a textbook, but for Giordano Bruno or Galileo Galilei in the 16th and 17th centuries, people who said it out loud, it could cost them their freedom or their lives. (Giordano Bruno was burned at the stake in 1600, in part for suggesting the stars were other suns. Galileo spent the last years of his life under house arrest.)

The truth was available.

The instrument was there.

But the world,

the world wasn’t ready for it.

We eventually used the telescope to improve our lives and to make discoveries:

to map trade routes (which made us wealthier)

to guide weapons more precisely (which made us deadlier)

to track the movements of Jupiter’s moons (which eventually gave us the first real estimate of the speed of light)

All of it came from the same instrument and gave us three completely different futures.

And that’s the pattern. That’s always been the pattern!

When a new way of seeing arrives, what we do with it depends not on the technology, but entirely on us.

Now let’s fast-forward a few centuries.

Google just released TimesFM.

A model trained on a hundred billion real-world time-series data points. It predicts weather, energy systems, economic patterns, disease spread.

Nvidia is building Earth-2. A digital twin of the planet’s entire climate system. Running in real time and getting more accurate every month.

People are already vibe-coding live dashboards of the Strait of Hormuz, and watching geopolitical risk move like weather.

Others are running agent swarms that predict political outcomes on Polymarket before they happen. Someone built a real-time intelligence board that filters world news through an AI reasoning layer and surfaces what actually matters.

In short, instead of one telescope, we are now able to create a hundred different instruments.

All of them pointing in the same direction.

We are not just building tools that help us work faster… we’ve come to a moment where we can build systems that model reality itself.

That look at the present and say: here is what comes next.

And standing on that beach, watching the light change, I kept asking myself the question I haven’t been able to answer since.

Does seeing the future clearly make us better at choosing a different one?

Think about Cassandra.

You probably know the myth: the Trojan princess, gifted by Apollo with the ability to see the future, then cursed, because she refused him, never to be believed.

She saw the wooden horse.

She screamed.

Nobody listened.

And Troy

… burned.

And here is the thing that haunts me about that story, the thing that I think we get wrong when we tell it.

We always focus on the curse.

On the tragedy of seeing and not being heard.

But I believe we should refocus our attention and look at this story differently.

What if being heard wouldn’t have been enough?

What if the Trojans had believed her, fully, completely, without doubt, and still rolled the horse through the gates? Because the general wanted the victory. Because the king didn’t want to appear afraid. Because the crowd had already decided the war was over and they wanted to celebrate.

Knowing the future does not automatically make us better at choosing a different one.

Knowing the future does not automatically make us better at choosing a different one.

I keep repeating it because I keep needing to hear it.

There is research, uncomfortable research, suggesting that when people are presented with very clear, very certain predictions of bad outcomes, they become less motivated to act. Not more.

The certainty produces a kind of grief before the event. A paralysis that wears the mask of acceptance.

We live inside this paradox already.

The climate models have been right for thirty years.

The Arctic ice loss,

the frequency of extreme weather events,

the ocean temperature rise.

All of these predictions were accurate and sometimes even ahead of schedule, and we still struggle to act at the speed the situation requires.

Not because we don’t know. If we claimed that, we would be lying to ourselves.

We know, we know very well what impact the future can have on our kids, and still so many of us fail to act on it.

So what happens when the predictions get more accurate? When they cover not just climate but economic collapse, political instability, crop failure, conflict zones, pandemic risk… all of it, updating in real time, showing us with 74% confidence what the next five years hold? Does that make us act?

Or does it make us spectators at our own future?

I see three futures from here.

In the first, prediction becomes a tool of control. The organisations and governments with access to the best models use them to stay ahead of consequences. To extract more efficiently. To suppress dissent before it organises. To make decisions that benefit them in the short term while the long-term cost is distributed across everyone else, and across every other species, quietly and unchallenged.

The future becomes readable only to those who can afford to read it, to those who can afford to get access to the… Palantir.

In the second, prediction paralyses us. The probable futures become so vivid, so detailed, so inescapable, that we lose the sense that our choices matter at all. If the model says 74% probability, the human mind hears: already decided. We stop choosing and fall completely into the religion of the “illusion of choice”.

We start spectating, spectating our lives from a third-person perspective.

In the third, the one I find myself hoping for on good mornings, walking on beaches before the light changes, prediction becomes a shared mirror. The systems are open. The data is public, and seeing the same future together changes what we choose, because it makes the consequences of our choices visible in a way nothing else ever could.

Visible to everyone.

Equally.

I still believe visibility is the first step towards care.

But here is what I cannot argue my way out of:

The most transformative moments in history did not come from better information.

The abolition of slavery,

The birth of democracy,

The emergence of the environmental movement

The moments when humanity genuinely changed direction, none of them were triggered by a better model of the world. They were triggered by something harder to quantify. Harder to train on a hundred billion data points…

Moral imagination, or the ability to feel the weight of a consequence before it lands. To extend genuine care to people and places and futures you will never personally experience. To look at a system and ask not just “what will happen?” but “what should we refuse to let happen?”

Better information does not automatically produce better humans.

It never has.

And so, as I was walking back from the beach, the light was flat, the gold was gone, and I thought:

“What kind of humans do we need to become for all of this to go well?”

Should we be smarter? Should we be faster? Should we become better at reading dashboards?

I believe we should be wiser.

We should be more honest about what we don’t know. More willing to sit in uncertainty rather than optimise it away, and better at holding multiple futures in mind simultaneously, rather than collapsing everything into the most probable one.

The models reflect the world as it is.

The future we get will depend on who is reading them and what those people, in the privacy of their own choices, actually care about.

That’s not a technical problem.

That’s the oldest human problem we have.

Now that you’ve read this, here is one more poll for you to think about. I’d also be happy to hear your ideas on the future. I am just a person doing my research and trying to make sense of the world, so that we have a telescope to navigate through this universe.

THANK YOU FOR YOUR UNCONDITIONAL SUPPORT!

PS: We also help impact-driven organisations steer AI for the benefit of humanity. If you know someone who could benefit from a call or a chat, don’t hesitate to reply to the email or visit “AI For Positive Impact”.

Arthur Z.


Your imagery of "building gods" is striking—ancient entities of calculation and foresight, forged not from myth but from data. As a clinician, though, I find myself looking less at the "instrument" (AI) and more at the "organism" wielding it: us.

You ask if seeing the future clearly helps us choose a different one. History and biology point to an unvarnished but necessary diagnosis.

1. The Deterministic Loop: Our behaviour isn't primarily a failure of "moral imagination." It emerges from a "biological source code" shaped by environments that rewarded survival and resource acquisition above all. When engines like TimesFM or Earth-2 sharpen our view of coming weather, markets, or conflict, they illuminate the path, but they don't automatically rewrite the drivers pushing us down it.

2. Insidious Catastrophes vs. Strategic Realignment: You mention the abolition of slavery and the birth of democracy (suffrage) as triumphs of care. While Walter Scheidel’s The Great Leveler documents how acute catastrophes—wars and collapses—reset power, I believe a more insidious biological mechanism is often at play in these "moral" shifts. For the enslaved or the disenfranchised, systemic suppression eventually threatens fundamental biological imperatives: survival and genetic propagation. The resulting resistance makes the "cost of maintenance" for the system through suppression and instability higher than the cost of reform. The concession of rights is rarely a moral epiphany; it is a strategic realignment of survival by the powerful to preserve the system's long-term homeostasis.

3. The AI Trigger: Today, the barrier to challenging entrenched interests has risen exponentially. Without a genuine "Black Swan" event to disrupt the current alignment of incentives, AI is more likely to serve the survival strategies of existing elites than to force a collective course correction.

4. Ingenuity vs. Wisdom: We went from the Wright brothers to the Moon in just 66 years—and we did it without AI. Building AGI is now a tangible engineering problem. But if history is any indication—from the Haber-Bosch process to the atomic bomb—we have rarely been wise enough to use new technology just as a "cure." We almost always use it as a club as well.

I have spent a great deal of time exploring these "terminal loops" in my book, The Theory of Us: The Final Diagnosis of the Human Species, but the question of agency remains the most challenging part of the prognosis.

What do you see as the most plausible path out of this loop—or do the gods we're building simply amplify what is already hardcoded?
